Originally launched by Anthropic in November 2024 and quickly adopted across the industry (with AWS joining the steering committee and major frameworks adding native support), the Model Context Protocol (MCP) was designed as a “USB-C for AI applications.” While its primary role is connecting agents to external data sources and tools, a useful pattern has emerged: Agent-as-a-Tool. By exposing one agent as a tool that another agent can call, this pattern makes MCP a practical protocol for agent-to-agent communication, enabling delegation, context sharing, and coordinated workflows with less custom glue code and less vendor lock-in.

This article provides a complete introduction to MCP with a focus on its agent-to-agent capabilities. Whether you're building multi-agent systems with LangGraph, CrewAI, Google ADK, or Spring AI, understanding MCP will help you design more interoperable, scalable agent architectures.

What Exactly Is MCP?

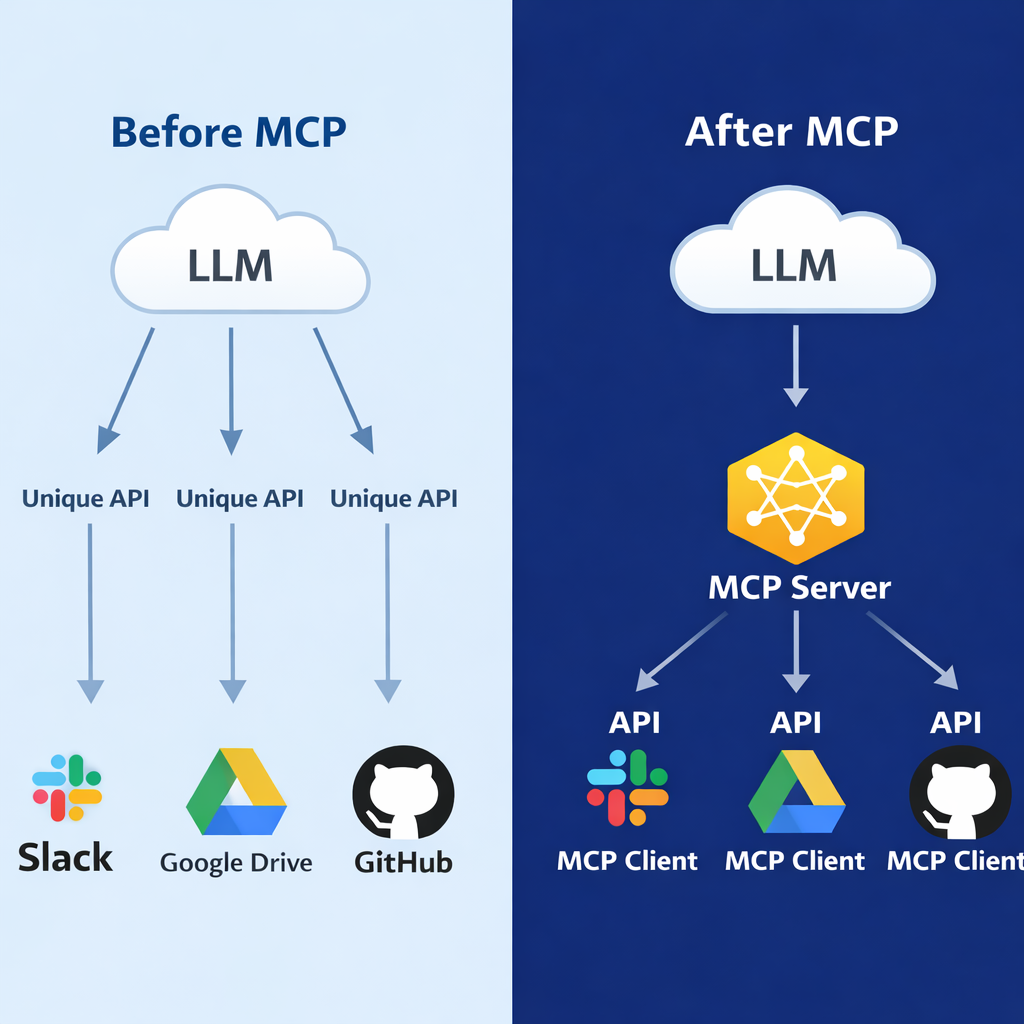

At its core, MCP is an open protocol (and accompanying SDKs in Python, TypeScript, Java, Go, and more) that standardizes how AI applications discover, connect to, and interact with external capabilities. Think of it this way: before MCP, every AI agent needed bespoke connectors for Slack, GitHub, databases, or other agents. MCP replaces that fragmentation with a single, consistent interface.

MCP messages are encoded as JSON-RPC 2.0 and travel over one of two standard transports: stdio for servers that run locally as a subprocess, and Streamable HTTP for remote servers, which can use Server-Sent Events (SSE) to stream responses. An MCP server can expose three main types of capabilities:

Resources: Readable data (files, database records, documents).

Tools: Executable functions (API calls, calculations, or entire sub-agents).

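Prompts: Reusable prompt templates that a client can list and fill in. (Sampling, which lets a server ask the client to run an LLM completion on its behalf, is a related feature offered by clients rather than servers.)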

An MCP client (embedded in your main agent or host application) can dynamically list available capabilities, invoke tools, stream results, and maintain session state. For HTTP-based transports, the specification defines an authorization framework based on OAuth 2.1, so access can be limited with scoped tokens, and hosts are expected to support human-in-the-loop approval before tools run.
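To make the client side concrete, here is a minimal sketch of what a single discovery request might look like over Streamable HTTP, using only the JDK's java.net.http classes rather than an MCP SDK. The endpoint URL, the token value, and the McpHttp class name are placeholders invented for illustration. A real client would first complete the initialize handshake and would usually let an SDK build these requests.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.time.Duration;

/** Illustrative helper (not part of any MCP SDK) that builds one HTTP request. */
final class McpHttp {
    private McpHttp() {}

    /**
     * Builds a POST carrying a JSON-RPC "tools/list" request.
     * The endpoint and bearer token are supplied by the caller; both are assumptions here.
     */
    static HttpRequest toolsListRequest(String endpoint, String bearerToken) {
        String body = "{\"jsonrpc\":\"2.0\",\"id\":1,\"method\":\"tools/list\",\"params\":{}}";
        return HttpRequest.newBuilder(URI.create(endpoint))
                .timeout(Duration.ofSeconds(30))
                .header("Content-Type", "application/json")
                // Streamable HTTP clients accept either a plain JSON reply or an SSE stream.
                .header("Accept", "application/json, text/event-stream")
                // OAuth access token, sent as a standard bearer credential.
                .header("Authorization", "Bearer " + bearerToken)
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }
}
```

The request is only built here, not sent. Sending it would be one more call on an HttpClient, and a stateful server may also expect a session header returned during initialization.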

What makes MCP special for agent-to-agent scenarios is its flexibility. Any agent can expose itself as an MCP server, turning its skills into callable “tools” that other agents can discover and use at runtime.

The Architecture That Powers Agent Collaboration

A typical MCP setup involves:

MCP Server: A lightweight service that wraps capabilities (including another agent) and serves them over stdio or HTTP. It handles authentication, capability discovery, and execution.

MCP Client: Embedded in the calling agent. It connects to one or more servers, negotiates capabilities, and issues calls.

Host Application: The runtime environment (Claude Desktop, custom Python app, enterprise platform) that hosts the client.

Communication follows a request/response model with streaming support. After an initialize handshake in which both sides declare their capabilities, the client sends a tools/list or resources/list request to discover what’s available. It then issues a tools/call request (CallToolRequest in the SDKs) with the tool name and arguments. The server executes, potentially delegating to another LLM or sub-agent, and streams back results, partial updates, or artifacts via SSE. Sessions can be stateless for simple queries or stateful for long-running collaborations.
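The discovery-then-call flow described above maps onto a short sequence of JSON-RPC 2.0 messages. The sketch below builds that sequence by hand so the wire format is visible. It is an illustration under assumptions, not SDK code: the McpMessages class, the client name "hr-agent", and the tool name and argument are invented, and the string escaping is deliberately minimal (a real client would use a JSON library).

```java
import java.util.ArrayList;
import java.util.List;

/** Hand-rolled builders for the messages a client sends in a typical MCP exchange. */
final class McpMessages {
    private McpMessages() {}

    /** Sent after the server answers "initialize"; notifications carry no id. */
    static final String INITIALIZED =
            "{\"jsonrpc\":\"2.0\",\"method\":\"notifications/initialized\"}";

    /** Minimal escaping: handles backslashes and double quotes only. */
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    /** Wraps a method name and an already-serialized params object in a request. */
    static String request(int id, String method, String paramsJson) {
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":" + quote(method)
                + ",\"params\":" + paramsJson + "}";
    }

    static String initialize(int id, String clientName) {
        String params = "{\"protocolVersion\":\"2025-06-18\",\"capabilities\":{},"
                + "\"clientInfo\":{\"name\":" + quote(clientName) + ",\"version\":\"0.1.0\"}}";
        return request(id, "initialize", params);
    }

    static String listTools(int id) {
        return request(id, "tools/list", "{}");
    }

    static String callTool(int id, String toolName, String argName, String argValue) {
        String params = "{\"name\":" + quote(toolName)
                + ",\"arguments\":{" + quote(argName) + ":" + quote(argValue) + "}}";
        return request(id, "tools/call", params);
    }

    /** Handshake, discovery, then one tool call, in the order a client sends them. */
    static List<String> typicalSequence(String query) {
        List<String> out = new ArrayList<>();
        out.add(initialize(1, "hr-agent"));
        out.add(INITIALIZED);
        out.add(listTools(2));
        out.add(callTool(3, "employeeQueries", "query", query));
        return out;
    }
}
```

Each request carries its own id so that responses, which may arrive out of order or over a stream, can be matched back to the call that triggered them.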

This design mirrors microservices but with AI-native features: capability negotiation and change notifications (clients can learn about new tools without redeploying), context passing (files or structured data), and cancellation/timeout handling.
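Timeout handling is easiest to see from the caller's side. The sketch below is plain Java, not SDK code: it bounds a delegated call with a time budget and falls back when the sub-agent is too slow. The BoundedDelegation class and the slowAgent helper are made-up stand-ins for a remote agent, and the comment marks where a real MCP client would additionally send a notifications/cancelled message for the abandoned request.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CompletionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;
import java.util.function.Supplier;

/** Caller-side time budget for a delegated call (illustrative only). */
final class BoundedDelegation {
    private BoundedDelegation() {}

    /** Runs the call asynchronously and returns the fallback if the budget is exceeded. */
    static String callWithTimeout(Supplier<String> delegatedCall,
                                  long timeoutMillis,
                                  String fallback) {
        CompletableFuture<String> pending = CompletableFuture.supplyAsync(delegatedCall);
        try {
            return pending.orTimeout(timeoutMillis, TimeUnit.MILLISECONDS).join();
        } catch (CompletionException e) {
            if (e.getCause() instanceof TimeoutException) {
                // A real MCP client would also notify the server here
                // (notifications/cancelled) so it can stop the work.
                return fallback;
            }
            throw e; // any other failure is propagated unchanged
        }
    }

    /** Hypothetical sub-agent that takes delayMillis to produce its answer. */
    static Supplier<String> slowAgent(long delayMillis, String answer) {
        return () -> {
            try {
                Thread.sleep(delayMillis);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
            return answer;
        };
    }
}
```

Note that the timeout only frees the caller. Without an explicit cancellation message, the delegated work keeps running in the background, which is why the protocol-level notification matters for long-running agents.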

Agent-to-Agent Communication via the “Agent-as-a-Tool” Pattern

Here’s where MCP shines for pure agent collaboration. Instead of building a separate agent-to-agent layer, you expose one agent as an MCP tool. The calling agent treats it like any other tool, so no special orchestration code is required.

A concrete example from AWS’s reference implementation (using Spring AI):

    @Tool(description = "answers questions related to our employees")
    String employeeQueries(@ToolParam String query) {
        return employeeInfoAgent.run(query); // delegates to sub-agent
    }

The employeeInfoAgent is wrapped and served via an MCP server. A higher-level HR Agent, equipped with its own MCP client, connects to http://employee-mcp-service:8080 and calls the tool as if it were a simple database lookup. The sub-agent can stream partial answers, request clarification, or even hand back artifacts (reports, JSON payloads).
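Stripped of the framework and the network, the pattern reduces to a registry that maps tool names to handlers, where one handler happens to be an agent. The following in-process sketch mimics the discover-then-call sequence in plain Java. It is an analogy, not the AWS or Spring AI implementation: the Agent interface, ToolRegistry, AgentAsToolDemo, and the canned answer are all hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

/** Hypothetical stand-in for an LLM-backed agent: takes a query, returns an answer. */
interface Agent {
    String run(String query);
}

/** Tiny in-process registry that plays the role of an MCP server. */
final class ToolRegistry {
    private final Map<String, String> descriptions = new LinkedHashMap<>();
    private final Map<String, Function<String, String>> handlers = new LinkedHashMap<>();

    void register(String name, String description, Function<String, String> handler) {
        descriptions.put(name, description);
        handlers.put(name, handler);
    }

    /** Discovery step, analogous to tools/list: returns a copy of name -> description. */
    Map<String, String> listTools() {
        return new LinkedHashMap<>(descriptions);
    }

    /** Invocation step, analogous to tools/call. */
    String callTool(String name, String argument) {
        Function<String, String> handler = handlers.get(name);
        if (handler == null) {
            throw new IllegalArgumentException("Unknown tool: " + name);
        }
        return handler.apply(argument);
    }
}

final class AgentAsToolDemo {
    private AgentAsToolDemo() {}

    /** Wraps a sub-agent as a tool; the canned reply stands in for a real model call. */
    static ToolRegistry employeeServer() {
        Agent employeeInfoAgent = query -> "employee-info-agent answer for: " + query;
        ToolRegistry registry = new ToolRegistry();
        registry.register("employeeQueries",
                "answers questions related to our employees",
                employeeInfoAgent::run);
        return registry;
    }

    /** The calling (HR) agent discovers tools first, then delegates by name. */
    static String hrAgentAsk(ToolRegistry server, String question) {
        if (!server.listTools().containsKey("employeeQueries")) {
            return "no suitable tool";
        }
        return server.callTool("employeeQueries", question);
    }
}
```

The calling agent never sees that the handler is another agent. From its side the contract is only a name, a description, and a string in and out, which is what lets the sub-agent be replaced or moved behind a network boundary without changing the caller.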

Google’s Agent Development Kit (ADK) takes the same approach, allowing developers to register entire specialized agents as MCP tools. A “Root Agent” can dynamically discover and delegate to a “Database Agent,” a “Legal Review Agent,” or a “Reporting Agent” without hard-coded integrations. A Medium article by Yi Ai demonstrates this pattern for building production-grade multi-agent systems with reduced complexity.

Key advantages over traditional message queues or custom APIs:

Dynamic discovery: Agents announce new capabilities at runtime.

Rich context sharing: Pass files, conversation history, or even prompt templates.

Real-time streaming: Partial results, progress bars, and live updates.

Security and governance: OAuth scopes limit what each agent can access, and because every call goes through one protocol surface, calls can be logged consistently for audit.

Model and framework agnostic: Works whether your agents run on Claude, Gemini, Llama, or custom models.

Real-World Use Cases and Benefits

Enterprise teams are already using MCP-powered agent networks for:

Customer support orchestration: A triage agent routes queries to specialized product agents via MCP calls.

Software development: Coding agents query GitHub, run tests, and consult documentation agents—all through MCP.

Financial analysis: One agent pulls market data (via tool), another performs compliance checks (via sub-agent), and a third generates reports.

Internal HR workflows: The example above—HR Agent delegates employee queries without exposing sensitive data directly.

Benefits are compelling. Development time drops because you reuse the same client library everywhere. Scalability improves because agents become microservices with clear contracts. Debugging becomes easier with standardized logs and streaming traces. And because MCP is an open specification with open-source SDKs in many languages, the risk of vendor lock-in is much lower.

Early adopters including Block, Apollo, Replit, and Sourcegraph report faster iteration and fewer integration bugs. AWS’s contribution to the steering committee and deployment guides for running MCP servers on Lambda or ECS further accelerate enterprise adoption.

MCP vs. A2A and Complementary Protocols

It’s important to clarify the landscape. Google’s Agent2Agent (A2A) protocol (announced April 2025) focuses explicitly on agent discovery, task delegation, and artifact exchange between peer agents. MCP, by contrast, started as an agent-to-tool and agent-to-context protocol and evolved to support agent-to-agent delegation through the Agent-as-a-Tool pattern.

Many teams use both: MCP for connecting to data/tools and internal agent subroutines, A2A for cross-organizational or highly asynchronous collaboration. A third protocol, ACP (Agent Communication Protocol) from IBM and the Linux Foundation, adds semantic message types and intent modeling for even richer dialogue.

The consensus in 2026: MCP is the practical starting point for most teams because it’s mature, widely supported, and already solves 80% of agent communication needs.

Getting Started with MCP

Ready to try it? The official quickstart at modelcontextprotocol.io takes under 30 minutes:

Install an SDK (e.g., pip install mcp for Python).

Expose a simple tool or wrap an existing agent as an MCP server.

Embed an MCP client in your main agent and connect.

Test with the official Inspector tool for visual debugging.

Sample repositories and pre-built servers for GitHub, Slack, Postgres, and Google Drive are available on GitHub. For production, add OAuth and deploy behind your existing auth infrastructure.

The Road Ahead

As agentic AI moves from prototypes to mission-critical systems, standardized communication protocols like MCP will be as foundational as HTTP was for the web. The ecosystem is exploding: more SDKs, managed MCP hosting services, and even MCP-native orchestration frameworks are arriving monthly. AWS, Google, Anthropic, and dozens of others are actively evolving the spec—adding better human-in-the-loop flows, advanced streaming, and cross-agent memory.

For developers and architects, the message is clear: stop building one-off agent integrations. Adopt MCP today and future-proof your multi-agent systems for the collaborative AI era.

In a world where agents will soon outnumber human developers in many workflows, MCP isn’t just a protocol—it’s the universal language that lets them work together as a true team.

Whether you’re experimenting with a two-agent research prototype or architecting an enterprise fleet of 50 specialized agents, MCP provides the clean, open, and powerful foundation you need for reliable agent-to-agent communication. The future of AI is multi-agent—and MCP is already making that future possible.